You're probably arguing with a bot (and losing)

The spicier the social media account, the larger its reach and the more influence it has.

I never thought I’d say this, but it’s an exciting time to be on X (Twitter) … again.

Recently, the site launched a new feature called “about this account,” which shows where accounts on X were created. It turns out that some of the most popular MAGA-branded social media accounts were created outside the U.S., in places like Eastern Europe, Thailand, Nigeria, and Bangladesh. Many of these countries are known to harbor bot farms. (A few hundred Democratic Socialist X accounts were also created in countries like Portugal, but NBC News reports that the main Democratic Socialist account is verified.)

X’s head of product, Nikita Bier, said the feature was deployed to help users verify the authenticity of the content they read on the platform. For years, X has struggled with bot accounts. After the feature launched, users on X were in an uproar, questioning where and how X pulled the location data. Bier responded that the location data is nearly 99.99% accurate.

This isn’t a political newsletter. This is a newsletter about attention in the digital economy. But where we put our attention today is largely political. This revelation from X is important because it shows how engagement (i.e., our attention) can be, and is being, manipulated by outside forces masquerading as your next-door neighbor.

Bots pushing partisan political content isn’t a new revelation. During the 2016 U.S. presidential election, bot accounts on Twitter and other social media platforms were allegedly used by a Russian government operation in an attempt to influence the outcome. These bot accounts mostly targeted highly partisan Republicans.

These bots were successful at driving engagement because of a fundamental flaw in social media: algorithms amplify strong emotions. Hate, fear, and anxiety are some of the strongest emotions out there. Paulo Coelho famously wrote: “If you want to control someone, all you have to do is to make them feel afraid.” That’s what we’re seeing happen to people who spend a lot of time online or watching cable news: they are being programmed to fear.

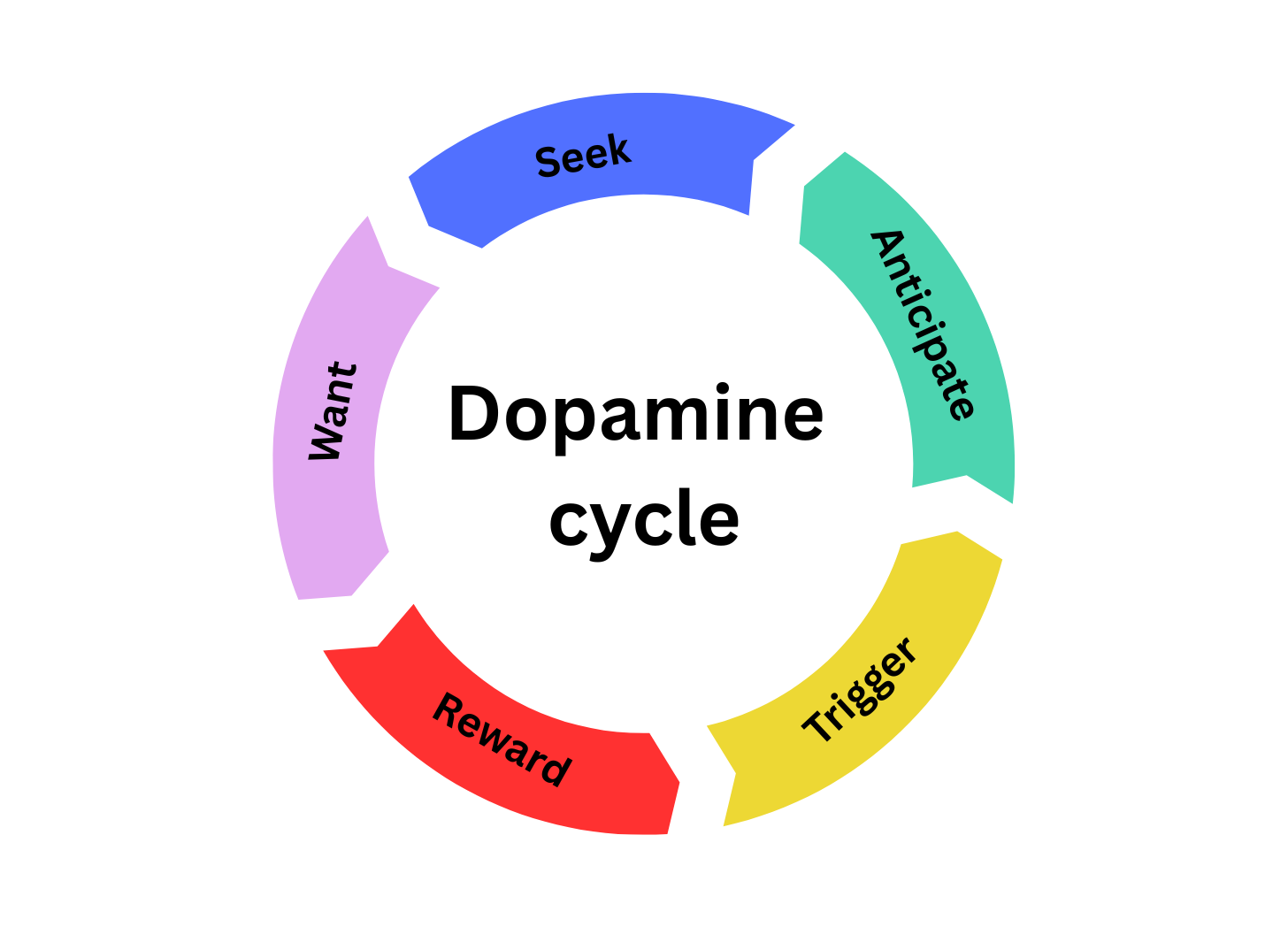

The dopamine cycle hamster wheel

Before we dive deeper into fear, let’s talk about pleasure and the dopamine cycle, part of the brain’s reward system. A stimulus, like a hug, can trigger a release of dopamine, which creates a sense of pleasure. We like experiencing pleasure, so we’re motivated to repeat any action that gives us that satisfaction.

Social media exploits the dopamine cycle. Think about every time you scroll on TikTok. It’s exciting to see new content. We derive pleasure from the novel and give it our attention. But like anything, we can become desensitized, so over time, we need more extreme content to trigger that dopamine release.

But if dopamine is about happy feelings, how is it related to anxiety, anger, and hate? In the brain, the dopamine cycle and the fear cycle are interconnected: dopamine helps both create and extinguish fear memories. When we watch something scary, we’re experiencing fear conditioning. Dopamine helps us learn that fear, and when the threat is gone, we experience “fear extinction,” which gives us a sense of pleasure. That’s one reason some people love watching scary movies.

So how does this link to social media, bots, politics, and your attention?

Well, social media uses algorithms to push extreme content that keeps us engaged on the platform. Cable news programming does the same thing. There’s a reason newspaper editors used to say, “If it bleeds, it leads”: people are drawn to scary, extreme, and dangerous things. That doesn’t mean we’re bad; it just means we’re human, and social media platforms, bots, engineers, marketers, politicians, and salesmen know how to pull the levers.

There’s a subreddit called r/FoxBrain, where concerned families and friends of people addicted to Fox News converge to talk about ways of deprogramming their relatives. A common theme people discuss in this subreddit is how fear-based media keeps their loved ones trapped in a cycle of anxiety. They describe their parents or relatives as being lost in a form of addiction, and that’s what happens when the dopamine and fear cycles are exploited; they become gateways to addiction.

Manually retrain your FYP

How do we break out of these dopamine and fear cycles? Honestly, it’s hard. Here are my 3 imperfect solutions:

1. Natively search specific topics you want to see on your feed

Limiting screen time is touted as a solution, but let’s be real: if I’ve got downtime between meetings or I’m having lunch alone, there’s a good chance I’m scrolling through TikTok. So I’ve taken great pains to curate a specific type of content on my social media feeds. On TikTok, I primarily watch cat and cooking videos; sometimes the cats are cooking, which is peak wholesome content. If I see political content on my FYP (For You Page), it’s probably because I Googled that topic, and my search information is now dictating what I see on my social media feeds. When that happens, I have to retrain my FYP to stop showing me that type of content. I override it by manually searching for the content I want to see in the platform’s native search, typing phrases like “cozy cat videos” and “girl dinner ideas.”

2. Block accounts that post extreme content

I also use the block feature quickly and often. I’ve made a habit of blocking accounts that share extreme content, especially extreme political content. When you block someone, you signal to TikTok that you don’t want that creator’s content, and the algorithm will use this information to adjust future recommendations. (It doesn’t even have to be political. I’ve blocked accounts that publish “stunt food,” food created specifically to generate rage. Think deep-frying a block of Velveeta. Yeah, I don’t need to watch that.)

3. Actively engage with creators and content you want more of

Finally, like, comment on, and share the content you want to see more often. I think I’ve saved around 10,000 adorable kitten videos. Even so, I still have to remind the algorithm every few weeks to give me wholesome content, because it starts to stray into other topics when it senses I’m not staying on the app as long as I usually do.

As you can see, it’s a lot of technological gymnastics. Social media platforms count on you being passive so they can serve you the content with the most engagement, which is usually the most polarizing.

The “botification” of social media

The biggest way I’ve been able to scale back my social media usage is by changing how I think about who is on these apps and what they want me to do. I’ve long had a theory that social media is controlled by three things: engineers, salesmen, and bots. With this new feature from X, I think I’m more right than ever.

If you’ve ever argued with someone on X or TikTok and then gone to their profile, there’s a good chance you found very little information there. The account is usually newish, has no original posts, and either has no profile photo or uses an AI-generated one. And the more extreme a person’s comments and text-based posts are, the higher the likelihood that the person is a bot. These accounts are designed to argue with people; that’s what makes them powerful, and that’s what gives their point of view credence. If a bot can trigger you and get you to engage with it, it’s already won. The next time someone on social media upsets you, remember: it’s likely a bot. Block them.

Why can’t we get rid of bots?

Blocking individual bots isn’t a long-term solution. The death of bots would be better. But why can’t we get rid of them?

Well, there isn’t an incentive for social media platforms to delete bots because they are often a source of revenue. Yep, that’s something your social media manager probably didn’t mention to you, right? Bots, especially the spicy, partisan ones, can inflate user and engagement metrics. The higher the engagement numbers, the more attractive a channel or creator is for advertisers.

The other thing social media founders don’t like mentioning is that getting rid of bots is hard. Removing fake accounts isn’t a one-time action; it requires continuous effort, manpower, and platform oversight, which makes it a big investment with little financial payoff. So it’s in the platforms’ best interest for social media users like you and me to accept the status quo.

So, we have a choice here. We can let the bots tell us how to think, feel, shop, and vote, or we can take more control of our social media life by actively influencing and retraining our feeds.

Me? I like thinking for myself, so I’m going to keep doing technological gymnastics with our social media overlords, who will do whatever it takes to control my attention.